API vs MCP

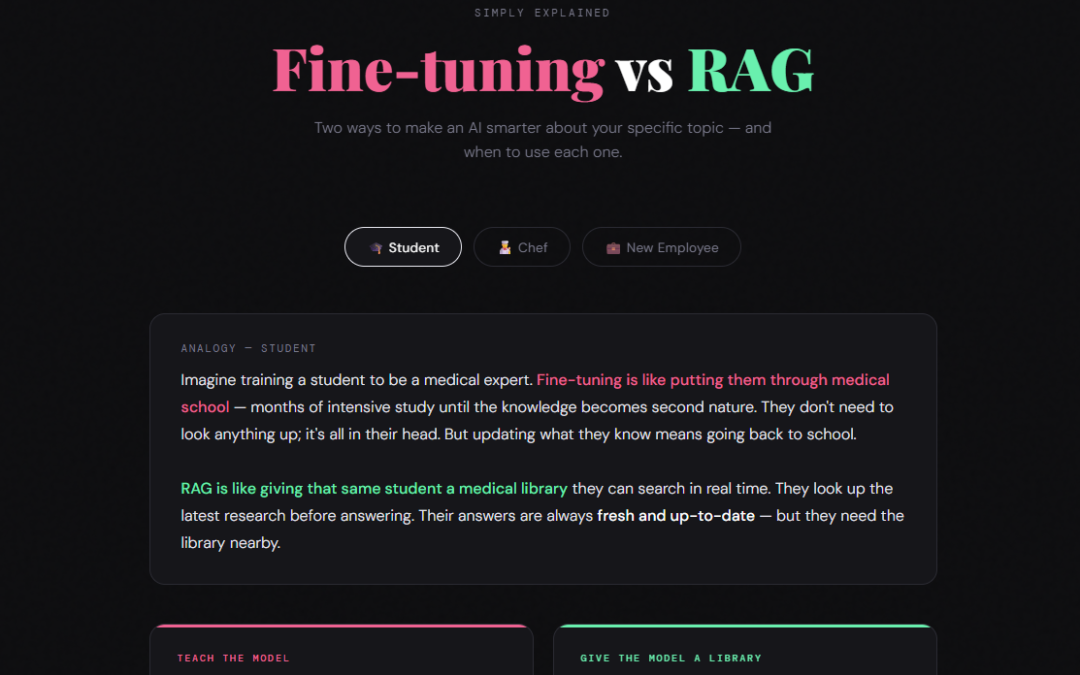

Simply Explained


Two ways software talks to other software — and why one is simpler than the other.

Analogy — Restaurant

Imagine you’re at a restaurant. An API is like ordering directly from the kitchen — you must know the exact menu, use the exact dish names, and speak the kitchen’s language. Every restaurant has its own unique menu you must learn.

MCP is like having a universal waiter who knows every restaurant’s language. You just say “I’d like something spicy and vegetarian” — and the waiter handles all the kitchen-specific details for you, no matter which restaurant you’re at.


Analogy — Phone Call

An API is like calling a specific company’s support line — you need their exact number, you follow their exact phone menu, and you speak their specific process. Every company has a different system to learn.

MCP is like having a universal translator assistant who makes calls on your behalf. You tell your assistant what you need in plain terms, and they know how to navigate every company’s phone system for you.


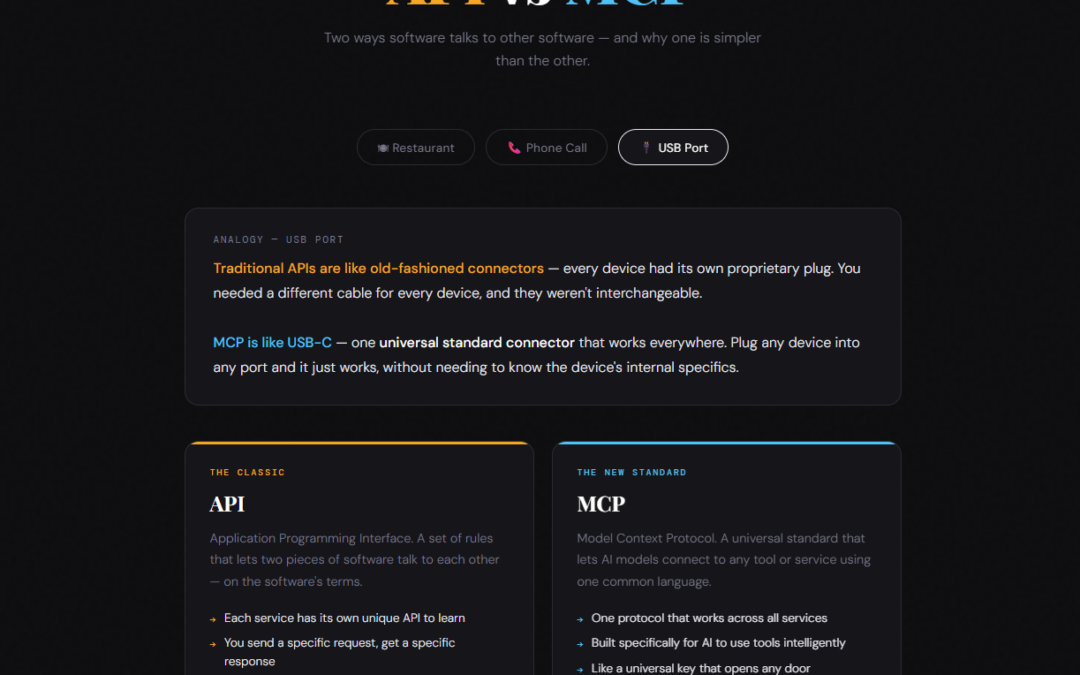

Analogy — USB Port

Traditional APIs are like old-fashioned connectors — every device had its own proprietary plug. You needed a different cable for every device, and they weren’t interchangeable.

MCP is like USB-C — one universal standard connector that works everywhere. Plug any device into any port and it just works, without needing to know the device’s internal specifics.


The Classic

API

Application Programming Interface. A set of rules that lets two pieces of software talk to each other, on terms set by the software being called.

- Each service has its own unique API to learn

- You send a specific request, get a specific response

- Like a custom door with a custom key

- Requires you to know exactly what to ask for

- Been around for decades — extremely common
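To make "a specific request, a specific response" concrete, here is a minimal sketch in Python. Both services, their URLs, and their parameter names are hypothetical and invented for illustration; the only point is that each API expects its own shape of request. The requests are built but never sent.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical service A: expects a GET with query parameters.
def build_maps_request(address: str) -> Request:
    query = urlencode({"address": address})
    return Request(f"https://maps.example.com/v1/geocode?{query}", method="GET")

# Hypothetical service B: expects a POST with a JSON body and a token header.
def build_chat_request(channel: str, text: str, token: str) -> Request:
    body = json.dumps({"channel": channel, "text": text}).encode("utf-8")
    return Request(
        "https://chat.example.com/api/post_message",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

# Two services, two different conventions the caller has to learn.
maps_req = build_maps_request("1 Main St")
chat_req = build_chat_request("#general", "hello", token="hypothetical-token")
print(maps_req.get_method(), maps_req.full_url)
print(chat_req.get_method(), chat_req.full_url)
```

The caller has to know in advance that one service wants query parameters and the other wants a JSON body with a token, which is the "custom door with a custom key" idea from the list above.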

The New Standard

MCP

Model Context Protocol. A universal standard that lets AI models connect to any tool or service using one common language.

- One protocol that works with any service that offers an MCP server

- Built specifically for AI to use tools intelligently

- Like one key that fits every door built to the same standard

- The AI figures out what to ask — you just give the goal

- New: introduced by Anthropic in November 2024 and being adopted rapidly
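The "one common language" can be sketched as follows. MCP messages follow JSON-RPC 2.0, and the method names `tools/list` and `tools/call` come from the MCP specification. The tool name `get_weather` and its arguments are hypothetical, and the helper functions are illustrative rather than part of any SDK.

```python
import json

def list_tools_request(request_id: int) -> dict:
    """Ask a server which tools it offers (same message for every server)."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}

def call_tool_request(request_id: int, name: str, arguments: dict) -> dict:
    """Invoke a tool by name (same envelope for every tool)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }

discover = list_tools_request(1)
# "get_weather" and its "city" argument are made up for this example.
invoke = call_tool_request(2, "get_weather", {"city": "Paris"})
print(json.dumps(invoke, indent=2))
```

Only the tool name and arguments change from one tool to the next; the envelope around them stays the same, which is what lets a model discover and use tools it was never specifically programmed for.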

How the connections look

API

Your App ──API 1──▶ Google Maps

Your App ──API 2──▶ Slack

Your App ──API 3──▶ Stripe

Every connection is custom — you learn each one separately.

MCP

AI Model ── MCP ──▶ MCP Server ──▶ Any Tool

One universal protocol. The AI talks to an MCP server, which handles all the tool-specific details.

💡

APIs aren’t going away — MCP is built on top of them.

MCP doesn’t replace APIs. Under the hood, MCP servers still use APIs to talk to services.

MCP just adds a standard layer on top so AI models don’t have to learn every API individually.
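A toy sketch of that layering, assuming a made-up weather service: `weather_api_get_forecast` stands in for a real API call, and `handle` is a hypothetical, heavily simplified stand-in for an MCP server, not the official SDK. The response shape loosely follows the MCP specification's text content blocks, but real servers also handle initialization, input schemas, and errors in more detail.

```python
import json
from typing import Callable

# Stand-in for a service's own API (hypothetical; a real one would be an HTTP call).
def weather_api_get_forecast(city_name: str, units: str) -> dict:
    return {"city": city_name, "units": units, "temp": 21}

# Tool registry: tool name -> (description, handler hiding the API's specifics).
TOOLS: dict[str, tuple[str, Callable[[dict], dict]]] = {
    "get_weather": (
        "Get the current forecast for a city.",
        lambda args: weather_api_get_forecast(city_name=args["city"], units="metric"),
    ),
}

def handle(message: dict) -> dict:
    """Answer one JSON-RPC 2.0 request the way a toy MCP-like server might."""
    if message["method"] == "tools/list":
        result = {
            "tools": [{"name": n, "description": d} for n, (d, _) in TOOLS.items()]
        }
    elif message["method"] == "tools/call":
        _, handler = TOOLS[message["params"]["name"]]
        data = handler(message["params"]["arguments"])
        result = {"content": [{"type": "text", "text": json.dumps(data)}]}
    else:
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        return {
            "jsonrpc": "2.0",
            "id": message["id"],
            "error": {"code": -32601, "message": "Method not found"},
        }
    return {"jsonrpc": "2.0", "id": message["id"], "result": result}

reply = handle({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "get_weather", "arguments": {"city": "Paris"}},
})
print(reply["result"]["content"][0]["text"])
```

The AI side only ever sends `tools/list` and `tools/call`; the service-specific details (here the `city_name` and `units` parameters) live inside the server, which is still calling an ordinary API underneath.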


When would you use each?

Use an API when…

- You're building a specific integration for your app

- You need precise control over every request

- You're connecting two non-AI systems to each other

- The service doesn't have an MCP server yet

- You're a developer writing direct integrations

Use MCP when…

- You're connecting an AI model to external tools

- You want the AI to use many tools at once

- You're building AI assistants or agents

- You want a plug-and-play setup for AI tools

- You want the AI to take real-world actions for you