Multi-Agent Systems: How AI Teams Are Replacing Single Models

The Evolution of Artificial Intelligence: From Monoliths to Ecosystems

For the past few years, the narrative surrounding Artificial Intelligence has been dominated by the 'bigger is better' philosophy. Large Language Models (LLMs) such as GPT-4, Claude, and Gemini have grown steadily in scale and capability, acting as monolithic engines that attempt to solve every problem thrown at them. However, as developers and enterprise architects, we are beginning to hit a ceiling with this approach. Relying on a single, general-purpose model for specialized tasks often leads to hallucinations, high latency, and high costs. Enter the paradigm shift: Multi-Agent Systems (MAS).

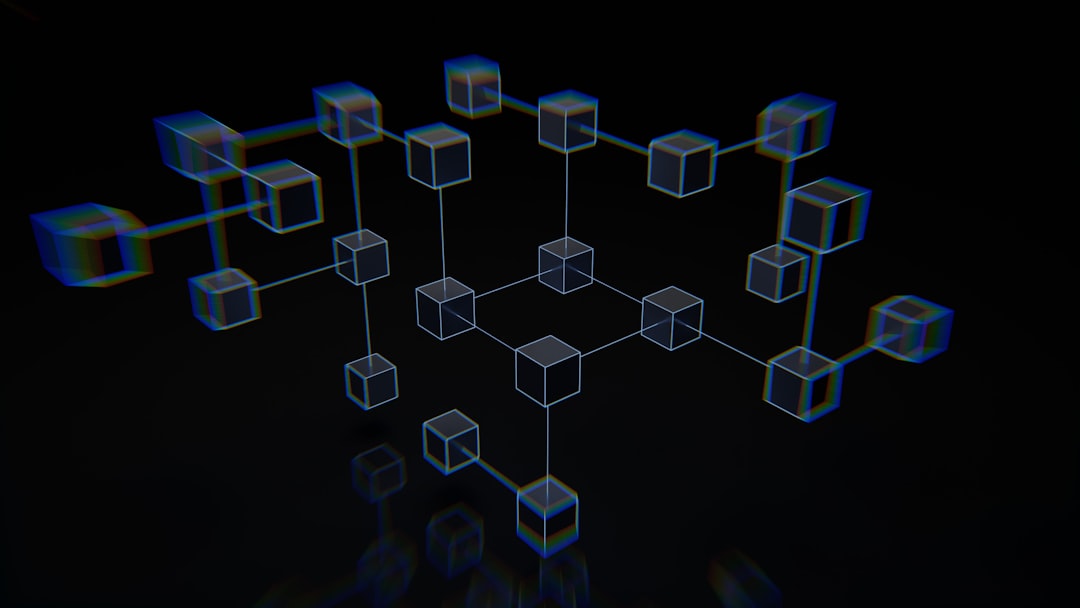

Multi-Agent Systems represent a fundamental move toward modular, collaborative AI. Instead of one model doing everything, we deploy a team of specialized agents, each designed for a specific role. These agents communicate, negotiate, and execute complex workflows, much like a human software development team or a corporate department. This article explores why this shift is not just a trend, but the future of enterprise-grade AI.

What Are Multi-Agent Systems?

At their core, Multi-Agent Systems consist of autonomous entities, called agents, that interact within a shared environment to achieve a common objective. In the LLM setting, an agent is essentially a model wrapped in a system prompt, armed with specific tools (like web search, API access, or code execution environments), and given a clear scope of responsibility.

In a typical MAS architecture, you might have:

- The Manager Agent: Oversees the project, breaks down high-level tasks into sub-tasks, and delegates work.

- The Coder Agent: Writes, reviews, and tests code based on specifications.

- The Researcher Agent: Scours the internet for the latest libraries or documentation.

- The Quality Assurance (QA) Agent: Critically evaluates the output and forces iterations if requirements aren't met.

This division of labor mirrors the microservices architecture in software engineering, where decoupling services leads to more maintainable, scalable, and robust systems.
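To make the division of labor concrete, here is a minimal, framework-free sketch of a manager that breaks a goal into sub-tasks and delegates each one to a specialist. Everything in it is an assumption for illustration: the `Manager` class, the stub functions `coder`, `researcher`, and `qa`, and the hard-coded plan are hypothetical, and in a real system each stub would wrap an LLM call.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# A "specialist" is just a function from a sub-task description to a result.
# These are hypothetical stubs; real agents would call a model here.
Specialist = Callable[[str], str]


def coder(task: str) -> str:
    return f"[code for: {task}]"


def researcher(task: str) -> str:
    return f"[notes on: {task}]"


def qa(task: str) -> str:
    return f"[review of: {task}]"


@dataclass
class Manager:
    team: Dict[str, Specialist]

    def plan(self, goal: str) -> List[Tuple[str, str]]:
        # Hard-coded plan for illustration. A real manager agent would ask
        # an LLM to decompose the goal into (role, sub-task) pairs.
        return [
            ("researcher", f"find libraries for {goal}"),
            ("coder", f"implement {goal}"),
            ("qa", f"check implementation of {goal}"),
        ]

    def run(self, goal: str) -> List[str]:
        # Delegate each sub-task to the specialist registered for that role.
        return [self.team[role](subtask) for role, subtask in self.plan(goal)]


manager = Manager(team={"coder": coder, "researcher": researcher, "qa": qa})
outputs = manager.run("CSV export")
for line in outputs:
    print(line)
```

Because the manager only knows roles by name, a specialist can be swapped out without touching the others, which is the same decoupling benefit microservices provide.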

The Core Advantages of Agentic AI

Why should businesses move away from a single, giant model? The benefits are tangible and significant.

1. Enhanced Accuracy Through Specialization

When you task a general-purpose model with writing a complex SQL query, it might get it right most of the time, say 80% as an illustrative figure. If you instead task a specialized 'Database Architect' agent, pre-prompted with the relevant database schemas and optimized for query generation, that accuracy can improve noticeably. By narrowing the focus, we reduce the 'search space' for the model, leading to higher precision.
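One way to picture "pre-prompting" is a small helper that turns a schema into a narrow system prompt. This is only a sketch: the `SCHEMA` contents, the `build_system_prompt` function, and the prompt wording are all hypothetical, and the resulting string would still need to be passed to whichever model or framework you use.

```python
# Hypothetical schema used only for illustration.
SCHEMA = {
    "orders": ["id", "customer_id", "total", "created_at"],
    "customers": ["id", "name", "email"],
}


def build_system_prompt(role: str, schema: dict) -> str:
    """Build a system prompt that restricts the agent to a known schema."""
    lines = [f"You are a {role}. Use only the tables and columns listed below."]
    for table, columns in schema.items():
        lines.append(f"- {table}({', '.join(columns)})")
    lines.append("Return a single SQL query and nothing else.")
    return "\n".join(lines)


prompt = build_system_prompt("Database Architect", SCHEMA)
print(prompt)
```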

2. Error Correction via Peer Review

One of the biggest flaws of LLMs is their tendency to confidently assert incorrect information. In a multi-agent system, we can implement a 'reviewer' pattern: one agent generates the work, and another acts as a critic that either approves it or sends it back with feedback. This correction loop catches many errors before they reach the end user.
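The reviewer pattern can be sketched as a bounded loop between a generator and a critic. The code below is a minimal illustration, not a definitive implementation: `review_loop`, `toy_generator`, and `toy_critic` are hypothetical names, the two toy functions stand in for LLM calls, and the `max_rounds` cap is an assumption added so the loop always terminates.

```python
from typing import Callable, Optional, Tuple


def review_loop(
    generate: Callable[[str, Optional[str]], str],
    critique: Callable[[str], Optional[str]],
    task: str,
    max_rounds: int = 3,
) -> Tuple[str, int, bool]:
    """Alternate generate and critique until approval or the round limit.

    Returns (last_draft, rounds_used, approved).
    """
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate(task, feedback)
        feedback = critique(draft)  # None means the critic approves
        if feedback is None:
            return draft, round_no, True
    return draft, max_rounds, False


# Hypothetical stand-ins for the generator and critic agents.
def toy_generator(task: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "SELECT * FROM orders"
    return "SELECT id, total FROM orders WHERE total > 100"


def toy_critic(draft: str) -> Optional[str]:
    if "*" in draft:
        return "Avoid SELECT *; list the columns you need."
    return None


final, rounds, approved = review_loop(toy_generator, toy_critic, "large orders")
print(final, rounds, approved)
```

Returning the `approved` flag alongside the draft lets the caller decide what to do when the critic never signs off, for example escalating to a human.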

3. Cost Optimization Through Model Selection

Not every agent needs to be the 'smartest' model. A research agent might only need a fast, low-cost model like GPT-4o-mini or Llama 3 8B, while the architect agent uses a more capable reasoning model. Matching the model to the difficulty of the task can reduce inference costs substantially, especially when the cheaper model handles most of the token volume.
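A quick back-of-the-envelope calculation shows why routing matters. All numbers below are made up for illustration: the prices in `PRICE_PER_MTOK`, the role-to-model mapping in `ROUTING`, and the token counts in `TOKENS` are assumptions, and real prices vary by provider and over time.

```python
# Hypothetical prices in dollars per million tokens.
PRICE_PER_MTOK = {"small-model": 0.50, "large-model": 10.00}

# Assumed mapping from agent role to model tier.
ROUTING = {"researcher": "small-model", "architect": "large-model"}

# Assumed token usage per role for one workflow run.
TOKENS = {"researcher": 800_000, "architect": 200_000}


def cost(tokens_by_role: dict, routing: dict) -> float:
    """Total cost in dollars for the given usage and role-to-model routing."""
    return sum(
        tokens / 1_000_000 * PRICE_PER_MTOK[routing[role]]
        for role, tokens in tokens_by_role.items()
    )


routed = cost(TOKENS, ROUTING)
all_large = cost(TOKENS, {role: "large-model" for role in TOKENS})
print(f"routed: ${routed:.2f}, everything on the large model: ${all_large:.2f}")
```

Under these assumed numbers the routed setup costs roughly a quarter of sending everything to the large model. The actual ratio depends entirely on your own prices and traffic mix.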

Implementation: Building Your First Agentic Workflow

To understand the mechanics, let us look at a conceptual implementation using a Python-based framework like CrewAI or AutoGen. These frameworks provide the orchestration layer required for agents to talk to one another.

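```python
# Conceptual snippet for an Agent definition (CrewAI)
from crewai import Agent
from crewai_tools import SerperDevTool  # web search tool; expects SERPER_API_KEY

search_tool = SerperDevTool()

# Fast, low-cost model for gathering information.
# Model names are passed as strings here; adjust to your CrewAI version and provider.
researcher = Agent(
    role='Senior Research Analyst',
    goal='Uncover groundbreaking trends in AI',
    backstory='You are an expert at finding hidden gems in technical papers.',
    tools=[search_tool],
    llm='gpt-4o-mini',
)

# More capable model for synthesis and writing
writer = Agent(
    role='Content Strategist',
    goal='Synthesize research into a compelling blog post',
    backstory='You are a master of storytelling and technical clarity.',
    llm='gpt-4o',
)
```

In this setup, the researcher's output is handed to the writer once both agents are assigned tasks and run together (in CrewAI, through Task and Crew objects, which are omitted here). The orchestration engine manages the context window, so the writer receives the research findings it needs without the prompt being cluttered by irrelevant data.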
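The hand-off itself can be illustrated without any framework. The sketch below is an assumption about how a sequential orchestrator might behave, not a description of CrewAI's internals: `run_sequential`, `research_step`, and `write_step` are hypothetical names, and the two step functions are stubs standing in for the researcher and writer agents.

```python
from typing import Callable, List

Step = Callable[[str], str]


def run_sequential(steps: List[Step], initial_input: str) -> str:
    """Feed each step only the previous step's output, not the full history."""
    payload = initial_input
    for step in steps:
        payload = step(payload)
    return payload


# Hypothetical stubs for the two agents.
def research_step(topic: str) -> str:
    # Any intermediate search results would stay local to this function;
    # only the summary is returned and passed on.
    return f"Summary of findings on {topic}"


def write_step(findings: str) -> str:
    return f"Blog draft based on -> {findings}"


post = run_sequential([research_step, write_step], "multi-agent systems")
print(post)
```

Passing only the previous output forward keeps each prompt small, at the price of the writer never seeing the researcher's raw sources.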

The Challenges of Scaling Agents

While the potential is massive, we must address the hurdles. As the number of agents increases, so does the complexity of the 'conversation.' If agents are not properly constrained, they can fall into infinite loops of negotiation. Furthermore, tracking the lineage of a decision becomes difficult. If an agent produces a bad result, was it the researcher's fault, or did the manager provide bad instructions? Observability platforms are becoming a critical requirement for any enterprise deploying multi-agent systems.
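Both problems, runaway conversations and unclear lineage, can be approached with a turn budget and a structured trace. The following is a minimal sketch under stated assumptions: the `Conversation`, `TraceEvent`, and `TurnBudgetExceeded` names are hypothetical, and a production system would more likely send these events to a dedicated observability platform than keep them in memory.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class TraceEvent:
    turn: int
    sender: str
    receiver: str
    message: str
    timestamp: float


class TurnBudgetExceeded(RuntimeError):
    """Raised when agents try to exceed the allowed number of turns."""


@dataclass
class Conversation:
    max_turns: int = 6
    events: List[TraceEvent] = field(default_factory=list)

    def send(self, sender: str, receiver: str, message: str) -> None:
        # Refuse new messages once the budget is spent, which breaks loops.
        if len(self.events) >= self.max_turns:
            raise TurnBudgetExceeded(f"stopped after {self.max_turns} turns")
        self.events.append(
            TraceEvent(len(self.events) + 1, sender, receiver, message, time.time())
        )

    def lineage(self, agent: str) -> List[TraceEvent]:
        """Every message the given agent received, in order."""
        return [e for e in self.events if e.receiver == agent]


convo = Conversation(max_turns=3)
convo.send("manager", "researcher", "Find CSV libraries")
convo.send("researcher", "coder", "Use the csv module")
convo.send("coder", "qa", "Draft ready for review")

try:
    convo.send("qa", "coder", "Please revise")  # fourth turn exceeds the budget
    halted = False
except TurnBudgetExceeded:
    halted = True

# The trace can be exported for later inspection.
trace_json = json.dumps([asdict(e) for e in convo.events], indent=2)
```

With the trace in hand, `convo.lineage("coder")` answers the question of what instructions the coder actually received, which is the first thing to check when its output is wrong.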

The Future: Agentic Workflows in the Enterprise

The next phase of AI deployment will not be about building the 'biggest' model, but about building the most efficient 'team.' We are moving toward a future where businesses deploy autonomous software departments that operate 24/7. These systems will autonomously handle customer support, code refactoring, data analysis, and even strategic planning.

Key Takeaways for Tech Leaders:

- Start Small: Don't try to build an autonomous company overnight. Start with a two-agent workflow (e.g., a generator and a validator).

- Define Clear Boundaries: Each agent must have a narrow, well-defined scope.

- Prioritize Observability: Log every agent interaction so you can trace and debug failures.

- Cost-Optimize: Match the model's capabilities to the task's complexity.

In conclusion, the era of the 'magic black box' model is ending. We are entering the era of collaborative, agentic intelligence. By treating AI as a team rather than a single tool, we unlock new levels of productivity, accuracy, and autonomy. At TechAlb, we believe this shift is the single most important development for businesses looking to integrate AI into their core operations effectively.